Programming Software Engineering

Multitasking and Processes

Nowadays almost all operating systems are capable to run multiple programs simultaneously as opposed to running them sequentially.

Running multiple programs at the same time is referred as multitasking.

Each program is said to be performing a separate task, hence the term multitasking.

Each task is also known as running in a separate independent process.

To run multiple process at the same time, we don't necessarily need multiple physical processors or multiple cores. A single processor and even a single core can 'time share' among multiple processes. Nowadays processors run so fast that we have an illusion that all multiple processes are running in parallel.

Multiple process are usually initiated by user actions on operating system

If the computer has multiple processors/cores then different processes/tasks can take advantage of them and can run in truly parallel fashion.

Threads and Multithreading

Each process can create a sub-tasks, known as threads.

A thread is separate sequence of code instructions.

Multiple threads within the same process can communicate and share resources. The term concurrency refers to executing multiple threads at the same time.

Multithreading is used to improve performance of an application by dividing sequential instructions in multiple parts. For a typical GUI based application it's normal to create background threads to perform long running tasks without blocking the user interface and to make it more responsive.

A server based application also uses multiple threads to handle many requests simultaneously.

Context switch

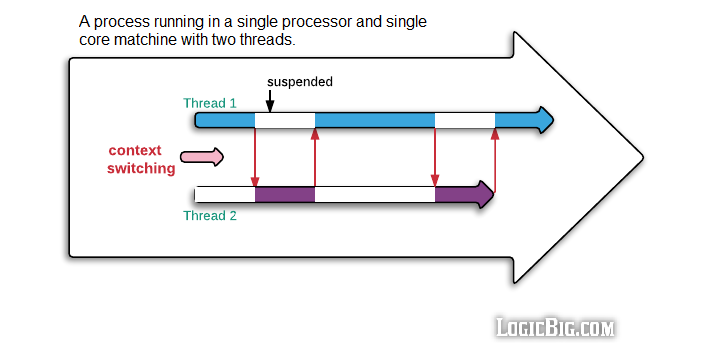

In a single processor environment, the CPU has to save the current thread/process state before switching to another thread/process. This is known as Context switch. Context switching is an essential feature of operating systems capable of multitasking.

Context switching is a costly process because of: scheduler spends extra CPU cycles/time in suspending the active thread temporarily so another thread's code can run, saving and restoring execution context and loss of locality.

Thread Scheduler

Thread Scheduler is responsible for context switching. It decides which waiting thread has to be chosen for the execution next.

The thread scheduler is the part of the OS.

Mutual Exclusion

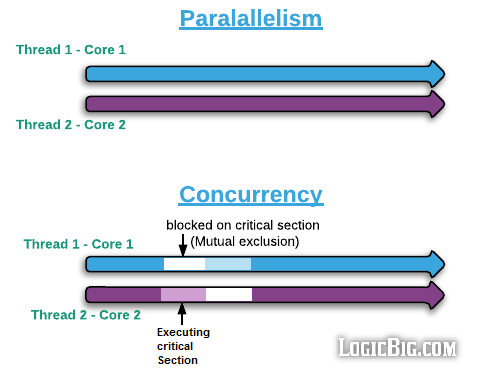

Mutual exclusion (mutex) refers to the requirement of ensuring that no two threads execute the critical code section at the same time. A critical section is part of the program which should be accessed by only one thread at a time. Typically, the critical section accesses a shared resource, such as a data structure, a peripheral device, or a network connection, that cannot operate correctly with multiple concurrent accesses.

Allowing multiple threads to access critical section at the same time results in race condition. A race condition occurred when a program logic does not execute in the order the developer intended. Race condition produces incorrect results.

A lock is usually used to enforce a mutual exclusion policy.

Mutual exclusion is also referred as thread synchronization.

Thread Contention

This is the situation where one thread is waiting for a mutual exclusion lock that is currently being held by another thread. Therefore, this waiting thread cannot use the locked resource until the other threads has unlocked it.

Disadvantage of thread contention is: it forces the scheduler to serialize operations, even if a free processor is available.

Concurrency vs Parallelism

Parallelism is running multiple threads in separate cores or processors so that context switching can be avoided. Also there's no communication between threads or no data is shared between the threads. Threads perform several computations independently. This requires hardware with multiple processors or core.

Concurrency is running multiple threads which share some data (or interfere: overlap their executions on some code). These threads cannot safely run without proper communication (synchronization). Sometimes it is referred as running multiple threads in a single core but that's not entirely accurate. The threads can run in multiple cores but they have to do proper synchronization between each other to achieve the goals.

MultiProcessor vs MultiCore vs Hyper-threading

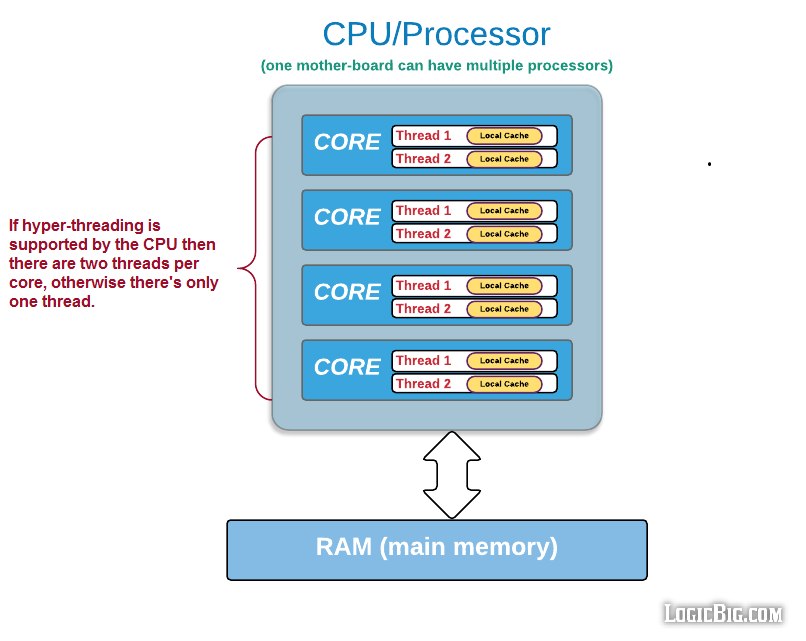

MultiProcessor is having separate multiple CPU units in a single machine (a single motherboard).

A single processor can have multiple core. Each core is capable to handle a separate execution of instructions. Hence if we are running a multithreaded application each thread can run in 'parallel' in each core.

A single core might be capable to run two parallel threads a well. This capability is known as hyper-threading. Each physical processor core is capable to run two threads simultaneously. The two threads share the core resources on hardware level. Operating system should optimize the use of hyper-threading. Operating system can also disable hyper-threading if it doesn't support it. Hyper-threading increases the number of independent instructions in the parallel. In a 4 Core processor there might be 8 logical processors.

Threads and CPU cache

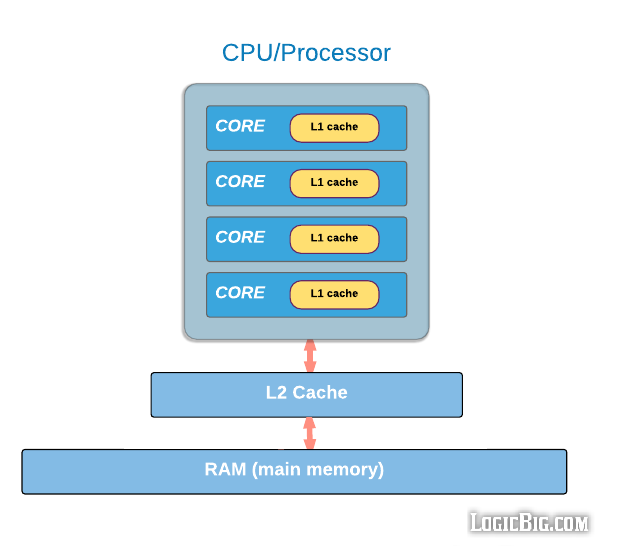

Depending on CPU Type, a fast cache is used to access commonly used data. CPU cache access is faster than main memory (RAM). The data cache is usually organized as a hierarchy of more cache levels L1, L2 etc.

L1-cache is the fastest cache and it usually comes within the processor chip itself. It uses the high-speed SRAM (static RAM) instead of the slower and cheaper DRAM (dynamic RAM) used for main memory. L2 cache comes between L1 and RAM and is bigger than L1. There might be a third level cache L3, depending on the CPU type.

Each core may have it's own L1 cache, so a thread running in each core has it's own local memory

Depending on CPU type each hyper-threading layer can have it's own private L1 cache. Multiple cores on smaller devices may not have their own L1 cache, that's because; having one cache per chip, rather than core, greatly reduces the amount of space needed, and thus smaller CPUs come with only larger intermediate shared cache chip like L2 shown in above diagram.

In a multithreaded program, multiple threads accessing critical sections and writing/reading shared data can be problematic if threads want to see the changes on shared variables made by other threads immediately. In those cases memory should immediately be made visible using native specific instructions. High level languages provide constructs to make sure variable visibility to other threads. In C, C++, C#, and Java programming languages the volatile keyword causes to flush or invalidate the local processor cache in order to make variable writes visible to other threads. On processor level, the memory flushing is also performed when lock and unlock (used for mutual exclusion) actions are taken.

|